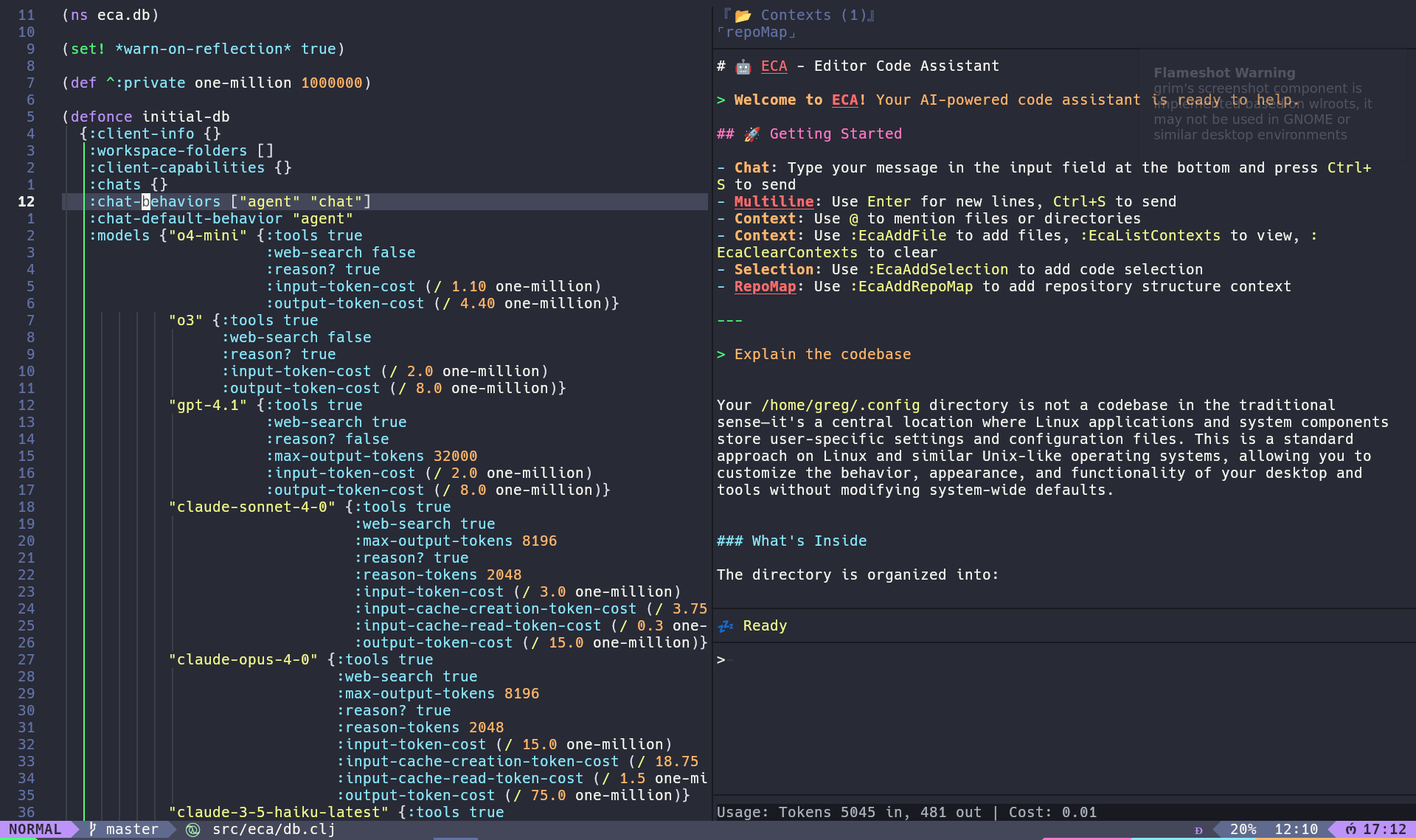

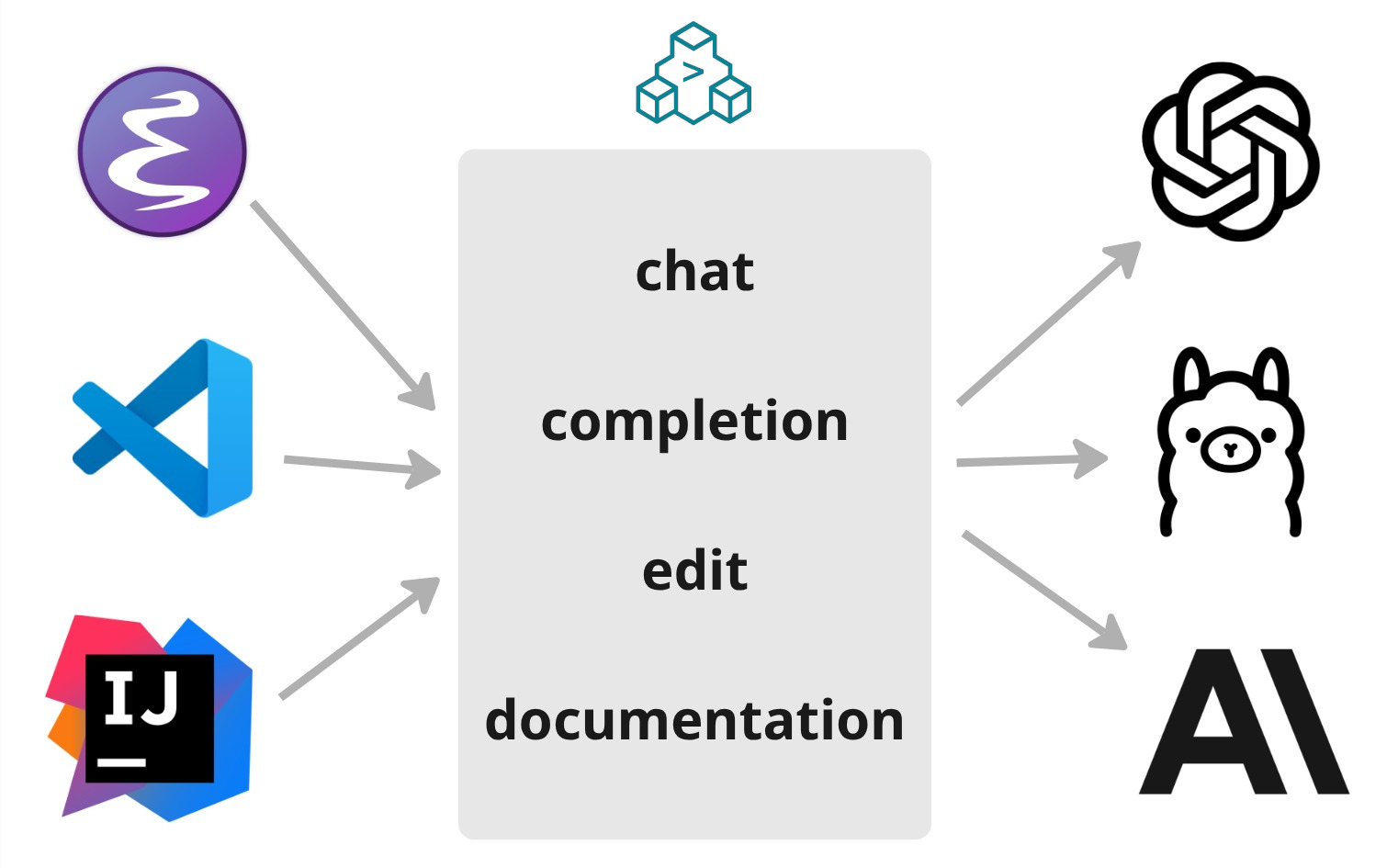

Editor Code Assistant

AI Pair Programming in Any Editor

A free, open-source, editor-agnostic tool that connects LLMs to your editor through a well-defined protocol — giving you the best AI coding experience everywhere.

Why ECA?

A protocol-first approach to AI coding — one server connecting any editor to any LLM.

For developers who want to stay in the loop, right inside their editor.

Major Features

Three core interactions powered by LLMs, plus a rich ecosystem of configuration and extensibility.

Chat

Pair program with an AI agent that can read, write, and refactor your code. Supports rollback, context injection, and multi-turn conversations.

Rewrite

Select code, describe the change, accept or reject the diff. Fast, low-ceremony edits that work great with lightweight models.

Completion

Inline AI-powered code suggestions as you type: multi-line, context-aware predictions in the style of Copilot.

Agents & Subagents

Built-in code and plan agents, plus subagents that run isolated tasks in parallel and save space in the main context window.

Tools & MCP

Native file/shell/editor tools, MCP server integration, and custom tool definitions with fine-grained approval control.

Context

Attach files, directories, cursor position, MCP resources, or AGENTS.md auto-context to any prompt.

Rules

Define coding standards, conventions, and constraints the LLM must follow — globally or per project.

Skills

Structured knowledge units that teach the LLM how to handle specific tasks. Follows the agentskills.io spec.

Commands

Slash commands like /init, /compact, /resume, and custom commands you define yourself.

Hooks

Before/after event callbacks to validate, notify, or trigger side effects on tool calls and prompts.

Variants

Switch between configuration presets on the fly — different models, reasoning effort, or custom setups.

Metrics

OpenTelemetry integration for exporting tool usage, prompt performance, and server metrics.

Plugins

Extend ECA with community plugins — bundles of tools, rules, skills, and more from the marketplace.

Remote

Observe and control your chat session from a browser via an embedded web server and web.eca.dev.

One Config To LLM Them All

Everything is driven by a single JSON config. Here are a few things you can do.